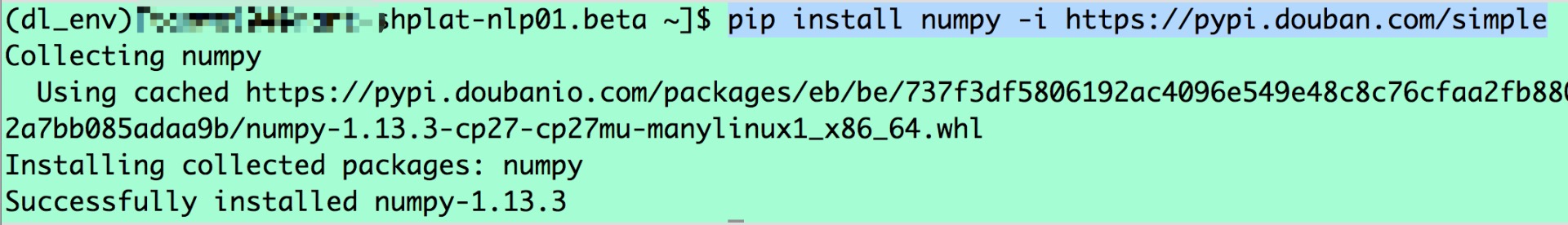

This article uses Jupyter Notebook for its examples, and the image above shows how to install PySpark. When working with PySpark on a local machine, there is no need to install Spark separately: the ‘pip install pyspark’ command installs Spark as well, although a Java runtime must still be available on the machine.

If you have worked with Pandas but not with PySpark, it may not be obvious why you would use PySpark when Pandas is available, so here are a few important differences between the two. A Spark DataFrame is immutable, whereas a Pandas DataFrame is mutable. A Spark DataFrame is fault tolerant, whereas a Pandas DataFrame is not. A Spark DataFrame supports parallel processing, whereas Pandas does not, because it is a single-machine tool. As a result, processing in Pandas is much slower for very large amounts of data. Pandas is a great choice for analyzing a small dataset on a single machine, but for much bigger datasets PySpark should be the choice, because Spark RDDs (and the DataFrames built on them) are distributed whereas a Pandas DataFrame is not.

Major Differences between PySpark and Pandas

This article introduces PySpark and highlights some of the important fundamentals of working with it.

PySpark is the Python API for Apache Spark. It lets Python code work with Resilient Distributed Datasets (RDDs) in Apache Spark, and it supports most of Spark’s features, such as Spark SQL, Streaming, MLlib, and Spark Core.
